This is an important topic for SQL DBA's to be aware of, but also is important when considering initial setup for SharePoint server farms (or any other large applications).

Like many things in IT, this is an easily-avoidable problem if approached proactively and smartly.

Some background info:

Autogrowth events are performance-costly events that occur when a SQL Server data (.mdf) or log (.ldf) file must grow in order for a transaction to complete. An autogrowth event can take whole seconds – depending on the autogrowth setting and physical IO performance – which is a very long time to a SQL Server database. Worst case is when many small autogrowth events happen in sequence and are required for a transaction to commit.

Each database file has an autogrowth setting. By default this is 1mb. So a large transaction with many megabytes of data could force a filled data or log file to suffer dozens of autogrowth events before it can return successfully to the application – at which time, your application may have timed out and/or formed a big blocking chain of stacked-up transactions waiting to finish.

So what is the proper autogrowth setting for a database data or log file?

Let's stop there and point out that this isn't the right question.

The better question is: Are we proactively pre-growing our database files?

In a perfect world, all file growths should happen after hours via manual intervention by an admin – outside of an application transaction. How often is an admin going through the data and log files and proactively growing the files, so that we can avoid autogrowth events altogether?

First, we should create new database data and log files much larger than the default sizes of 4mb data file and the 1mb log file. If you pre-allocate 5gb and 200mb to each file (just as an example), your database won't suffer an autogrowth event until it hits 5gb in data or 200mb of transactional data between transaction log backups in FULL recovery mode, or 200mb of concurrent transactions at any time in SIMPLE recovery mode. Use your best judgment and keep in mind your server's storage capacity to make a realistic guess at the database's size, then monitor it regularly.

Second, a good autogrowth setting will make the performance hit of an autogrowth event as brief as possible. The autogrowth setting should depend on the performance of the physical storage subsystem. Autogrowing by a very large amount (1gb) could take far too long (whole seconds), while growing by too small an amount (1mb) will only make autogrowth events too frequent. A percentage growth setting of 10% may be appropriate for only databases of at least 100mb but smaller than 5gb. A fixed autogrowth setting of 100-500mb is likely to be accomplished fast enough by your storage subsystem to prevent multiple and frequent events.

But to repeat – ideally, autogrowth events never happen.

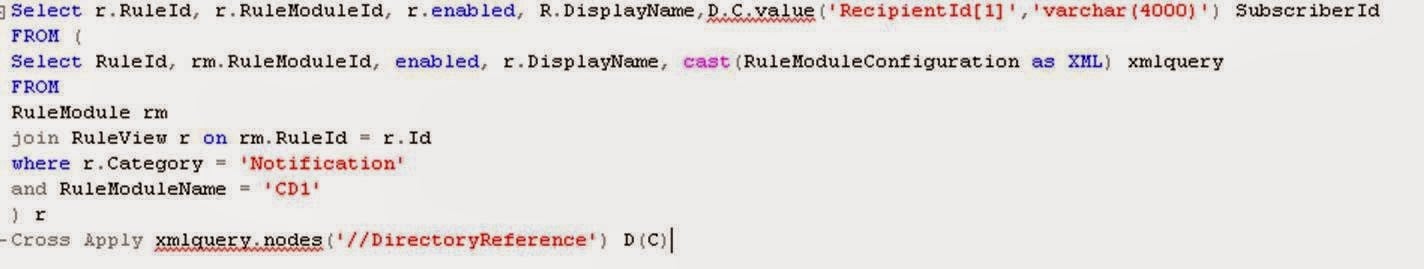

Here is an easy TSQL script to query every database file's used and available space:

exec sp_MSforeachdb 'use [?]; SELECT ''DatabaseName_____________'' = d.name ,

Recovery = d.recovery_model_desc , ''DatabaseFileName_______'' = df.name ,

''Location_______________________________________________________________________'' = df.physical_name , df.File_ID , FileSizeMB = CAST(size/128.0 as Decimal(9,2)) , SpaceUsedMB = CAST(CAST(FILEPROPERTY(df.name, ''SpaceUsed'') AS int)/128.0 as Decimal(9,2)) , AvailableMB = CAST(size/128.0 - CAST(FILEPROPERTY(df.name, ''SpaceUsed'') AS int)/128.0 as Decimal(9,2)) , ''Free%'' = CAST((((size/128.0) - (CAST(FILEPROPERTY(df.name, ''SpaceUsed'') AS int)/128.0)) / (size/128.0) ) * 100. as Decimal(9,2))

FROM sys.database_files df

cross apply sys.databases d

where d.database_id = DB_ID() and size > 0 'The underscores in the column aliases are there to help spacing in the query resultset, to allow the rightmost columns to line up nice and evenly for you in most cases.

In summary, avoid performance drags by performing proactive initial preallocation of database data and log files, plus regular manual growth of near-full files. This is important for DBA's and "accidental" DBA's to monitor on production SQL Server databases.

This is provided "AS IS" with no warranties, and confers no rights. Please always verify by testing in a non-production environment.

Information and material in our blog posts are provided "as is" with no warranties either expressed or implied. Each post is an individual expression of our Sparkies. Should you identify any such content that is harmful, malicious, sensitive or unnecessary, please contact marketing@sparkhound.com

Share this

You May Also Like

These Related Stories

Enterprise Transparent Data Encryption In SQL Server

Five Scariest SQL Server Data Types